A retrospective from someone who lived through the chaos, one CSS hack at a time

The Year Everything Was On Fire

By 2005, I'd been building websites professionally for nearly a decade. I'd survived the pager era and the dot-com bust, learned to stop using tables for layout, and thought I'd seen the worst the web could throw at me.

I was wrong.

Internet Explorer 6 ruled the web with an iron fist, somewhere around 85% market share. Microsoft hadn't released a major browser update since 2001 and showed no signs of caring. Why would they? They'd won. Netscape was on life support with barely 1% of users still clinging to it like passengers on a sinking ship. And the rest of us? We were caught in the crossfire.

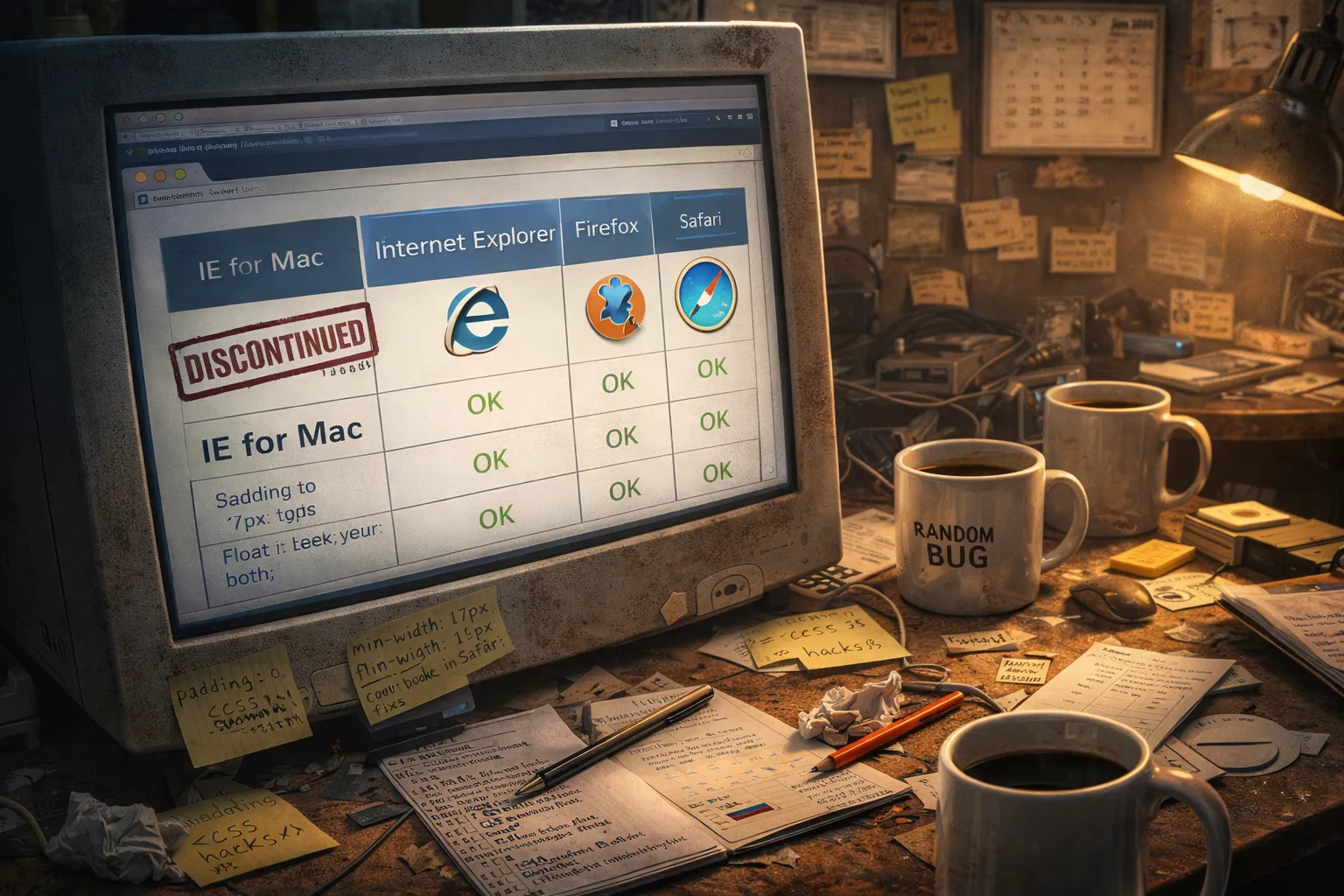

Every project became an exercise in writing the same code three different ways. First, you'd write CSS the way it was supposed to work (assuming you weren't relying on FrontPage or Dreamweaver to generate it for you). Then you'd write a version for IE6 using conditional comments, proprietary filters, and prayers. Then you'd add JavaScript hacks to fix things like PNG transparency, because apparently displaying an image with a transparent background was too much to ask of the world's most popular browser.

    /* The hat trick of desperation */
    .element {
        background: url(image.png); /* For browsers that aren't broken */
        _background: none; /* IE6 underscore hack */
        _filter: progid:DXImageTransform.Microsoft.AlphaImageLoader(src='image.png'); /* IE6 PNG fix */
    }

Designers would hand me beautiful Photoshop mockups on their Macs, viewed in Safari. The client's IT department ran IE6 on Windows XP. The CEO's nephew was still using Netscape 7 because "it works fine." And somehow, my job was to make a single website look identical in all three. A task roughly as achievable as making water flow uphill.

"Pixel perfect," they'd say.

I learned to hate those words.

The First Signs of Hope

In late 2004, something remarkable happened: Firefox 1.0 shipped. Born from the ashes of Netscape's open-source Mozilla project, it was fast, standards-compliant, and refreshingly not terrible. Web developers adopted it immediately. Not because we loved Firefox, but because we were desperate for anything that wasn't IE6.

Meanwhile, Apple had been quietly building Safari since 2003. For Mac users, it was a revelation. For Windows developers like me, it was another browser to test, but at least it mostly followed the rules. Safari didn't try to reinvent CSS or ignore half the JavaScript specification. It just... worked.

Then there was Opera. Poor, beautiful, misunderstood Opera.

The Norwegian browser had been around since 1995, pioneering features like tabbed browsing, pop-up blocking, and mouse gestures. Innovations that other browsers would eventually steal wholesale. But Opera never broke 3% market share. It was the Betamax of browsers: technically superior, commercially irrelevant. Still, we had to test for it. Someone's client was always "an Opera user," and that someone was always the person who signed the checks.

By 2006, the battlefield looked like this: IE6 was the entrenched dictator, Firefox was the scrappy resistance, Safari controlled the Mac highlands, and Opera was the lone sniper nobody could quite locate.

And Netscape? Netscape was already dead. It just didn't know it yet.

The Giants Begin to Fall

June 2003 marked the beginning of the end for one front in the browser wars. Microsoft announced it was ending support for Internet Explorer on Mac. The final update shipped in July 2003, and support ended in December 2005.

For Mac developers, this was liberation. Safari became the default, and suddenly the Mac web made sense. For those of us building cross-platform sites, it meant one less browser in the testing matrix. A small mercy in an ocean of chaos.

October 2006 marked the first real movement from Microsoft in five years: the release of Internet Explorer 7.

Let that sink in. Five years without a major update to the world's most-used browser. In web development terms, that's approximately seventeen geological epochs.

IE7 finally added tabbed browsing, a feature Firefox had shipped two years earlier. It improved CSS support, though "improved" is doing a lot of heavy lifting in that sentence. It was still broken, just broken in new and exciting ways. We traded our old CSS hacks for new ones and kept shipping.

But IE7 wasn't even the biggest news of the era.

A New Front Opens

January 9, 2007. Steve Jobs walks onto a stage in San Francisco and pulls a rectangle out of his pocket.

The iPhone. It went on sale on June 29.

In that moment, everything changed. Not because the phone itself was revolutionary (though it was), but because it carried Mobile Safari. A real web browser. On a phone.

Before the iPhone, "mobile web" meant WAP pages and stripped-down HTML that looked like a Geocities site from 1996. The iPhone rendered actual websites, the same sites we built for desktops, on a screen the size of a playing card.

And clients noticed.

Within months, I started hearing a phrase that would haunt me for years: "Have you checked how this looks on my phone?"

The worst part? Clients no longer checked sites on their company's ancient Windows machines. The CEO's first impression of your new website wasn't on the carefully tested IE7 installation in the conference room. It was on his iPhone, squinting at text meant for a 1024-pixel-wide monitor crammed onto a 320-pixel screen.

We'd just opened an entirely new front in the browser wars. And we had no idea how to fight it.

The Death of Netscape (Finally)

March 1, 2008. AOL officially pulled the plug on Netscape, recommending users switch to Firefox or Flock. By then, Netscape had fallen below 1% market share. A browser so irrelevant that its death was less a tragedy than a mercy killing.

I should have celebrated. One less browser to test. One less set of hacks to maintain. One less client saying, "My sister uses Netscape, and she says the fonts look wrong."

But I barely had time to raise a glass before the next combatant entered the arena.

The Behemoth Arrives

September 2, 2008. Google released Chrome.

At the time, it seemed like overkill. Did the world really need another browser? Microsoft had IE. Mozilla had Firefox. Apple had Safari. Even Opera was still hanging around. The browser market felt crowded enough.

What we didn't understand was that Chrome was more than just a browser. It was Google's beachhead into your computer, a platform designed to run web applications as fast as desktop software. While IE was still struggling to render CSS correctly, Chrome was shipping a JavaScript engine (V8) that made web apps feel genuinely responsive.

Within nine months, 30 million people were using Chrome. By 2010, that number had quadrupled to over 120 million.

The browser wars had a new superpower, backed by the biggest name in tech.

The Monster That Wouldn't Die

If there's one thing I'll never forget about the browser wars, it's this: IE6 refused to die.

We thought it would fade naturally. Firefox was growing. Chrome was exploding. Safari dominated mobile. But in corporate America, IE6 clung to life like a cockroach in a nuclear winter.

The problem was Windows XP. Enterprises had invested billions in XP deployments, and IE6 was baked into the operating system like mold in old bread. Corporate IT departments couldn't upgrade without breaking internal applications built specifically for IE6's quirks. So they didn't upgrade. And we kept writing hacks.

March 2011. Microsoft launched ie6countdown.com, a website specifically designed to shame IE6 into extinction. Microsoft. Begging people to stop using their own browser. If that doesn't tell you how bad things had gotten, nothing will.

Web developers worldwide sent flowers to mock funerals. We created countdown sites, death clocks, and increasingly desperate support banners. "Please upgrade your browser" became the most-typed phrase in web development.

January 2012. IE6 finally dropped below 1% market share in the United States. We celebrated as if the war were over.

It wasn't.

April 8, 2014. Microsoft ended support for Windows XP, and with it, finally, definitively, IE6. The browser that had terrorized us for thirteen years was officially dead.

But here's the kicker: IE7 was supported until 2017. IE8 until 2020. The monster just kept spawning sequels.

The Responsive Design Revolution

While we were fighting the IE wars, a quiet revolution was brewing.

May 2010. Ethan Marcotte published an article in A List Apart called "Responsive Web Design." In it, he outlined a radical idea: instead of building separate sites for desktop and mobile, we could build a single site that adapts to any screen size using fluid grids, flexible images, and media queries.
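For anyone who never wrote one by hand, here is a minimal sketch of those three ingredients working together. The class names (`.page`, `.main`, `.sidebar`), the 940px reference width, and the 600px breakpoint are illustrative assumptions of mine, not values taken from Marcotte's article.

```css
/* Fluid grid: column widths are proportions of the container, not fixed pixels */
.page {
  width: 90%;
  max-width: 940px; /* hypothetical reference width for the proportions below */
  margin: 0 auto;
}

.main {
  float: left;
  width: 65.9574%; /* 620px / 940px */
}

.sidebar {
  float: right;
  width: 31.9149%; /* 300px / 940px */
}

/* Flexible images: never wider than the column that holds them */
img {
  max-width: 100%;
  height: auto;
}

/* Media query: below the hypothetical breakpoint, stack the columns */
@media screen and (max-width: 600px) {
  .main,
  .sidebar {
    float: none;
    width: auto;
  }
}
```

Resize the window and the two columns shrink in proportion; once the viewport drops to 600px or less, they stop floating and stack vertically.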

It sounds obvious now. At the time, it felt like heresy.

The old way was to build a website, then build a mobile version at m.yourdomain.com, then pray that nobody accessed your site on a tablet because you hadn't built a tablet version yet. Responsive design promised to end that madness.

By 2012, media queries were an official W3C recommendation. Mashable went on to declare 2013 "The Year of Responsive Web Design." Suddenly, every client wanted their site to work on every device.

The problem? Responsive design was built on CSS3 features that not all browsers supported equally. Mobile Safari had viewport quirks. Firefox handled media queries differently from Chrome. And IE? IE was still IE.

We weren't just writing CSS hacks for different browsers anymore. We were writing them for different browsers on different devices at different screen sizes. The combinations were nearly infinite.

And the worst part? Clients had started checking their phones first.

I can't count the number of times I heard: "It looks great on my computer, but on my iPhone..." Those ten words became the soundtrack of my nightmares. We'd spend weeks perfecting a desktop experience, only to hear that the CEO had glanced at it on his phone during a meeting and hated it.

The browser wars had gone mobile, and nobody was winning.

The Windows 10 Era: Edge of Desperation

July 2015. Microsoft released Windows 10 with a brand new browser: Microsoft Edge.

Edge was supposed to be Microsoft's fresh start. They'd forked IE's old Trident engine into a new rendering engine (EdgeHTML), stripped out the legacy IE cruft, and finally promised to play nice with web standards.

One problem: IE11 still shipped with Windows 10 for "compatibility." Corporate IT departments weren't upgrading to Edge. They were using the same IE11 they'd been using for years, which was only slightly less painful than IE8.

Edge Legacy (as it came to be known) was actually decent. Fast, reasonably standards-compliant, and blissfully lacking in the quirks that had defined Internet Explorer for decades. But it was too little, too late. Chrome had already won.

By this point, Firefox had peaked and begun its long decline. It hit around 32% market share in late 2009, but Chrome was eating its lunch. The scrappy resistance fighter that had saved us from IE's tyranny was now itself becoming irrelevant.

The Calm After the Storm

Somewhere around 2015, I realized something strange: I wasn't angry anymore.

The constant testing, the endless hacks, the late nights debugging CSS that only broke in one specific browser on one specific platform. It was fading. Not disappearing entirely, but fading.

Chrome dominated with over 60% market share. Safari on iOS had stabilized, its JavaScript quirks mostly ironed out. Firefox still existed, but had declined to the point where most clients didn't care about it. Before long, even Edge would be moving toward Chromium.

Responsive design had matured from a revolutionary idea into a standard practice. You didn't "make a site responsive" anymore. You just made a site. Mobile-first wasn't a strategy; it was the default.
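In stylesheet terms, mobile-first just means the base rules target the small screen and `min-width` queries layer the wider layout on top. A minimal sketch, assuming hypothetical `.nav` and `.content` class names and a 768px breakpoint chosen purely for illustration:

```css
/* Base styles: written for the narrowest screen first */
.content {
  padding: 1rem;
}

.nav a {
  display: block;        /* one link per row */
  padding: 0.75rem 1rem; /* roomy touch targets */
}

/* Wider viewports opt in to the roomier layout */
@media (min-width: 768px) {
  .content {
    max-width: 60rem;
    margin: 0 auto;
    padding: 2rem;
  }

  .nav a {
    display: inline-block; /* links sit side by side */
  }
}
```

A browser that never matches the query still gets a complete, usable page, which is the point of starting from the small screen.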

Android browsers in US corporate environments? Basically a rounding error. Sure, there were outliers like somebody's CEO with a Galaxy phone who complained about touch targets, but those were the exceptions, not the rule.

My dev team had switched to Macs. Safari desktop had grown enough that we couldn't ignore it anymore, but Safari had also become good enough that we didn't have to fight it. We just... built websites.

The War's End

January 2020. Microsoft surrendered. Edge switched from its homegrown EdgeHTML engine to Chromium, the same engine powering Chrome.

Think about that for a moment. Microsoft, the company that had waged a decade-long war against Netscape, that had bundled IE so aggressively it faced antitrust lawsuits, that had refused to implement web standards because it didn't have to. That Microsoft was now using Google's browser engine.

June 15, 2022. Support for Internet Explorer 11 officially ended.

February 2023. IE11 was permanently disabled on Windows 10.

The browser wars were over. Chrome had won.

The Chromium Age

Look at the browser landscape today:

- Chrome: ~65% market share, Chromium-based

- Safari: ~18% market share, WebKit-based

- Edge: ~5% market share, Chromium-based

- Firefox: ~2.5% market share, the last independent holdout

- Opera: ~2% market share, Chromium-based

- Brave: Chromium-based

- Arc: Chromium-based

- Vivaldi: Chromium-based

Chromium's Blink engine began as a fork of WebKit, and together the Chromium/WebKit family accounts for over 90% of browser usage. When I write CSS today, I'm essentially writing for one family of rendering engines with minor variations.

Some people worry about this monoculture. They remember what happened the last time one browser dominated the market: the five years of stagnation under IE6, the security nightmares, the death of innovation. And they're not wrong to worry. We've traded one kind of chaos for dependency on a handful of tech giants.

But from the trenches, I can tell you: the peace is nice.

I don't write CSS hacks anymore. I don't maintain browser-specific JavaScript files. I don't get emails from clients asking why the site looks "weird" in their nephew's browser.

I just build websites. They work everywhere.

Lessons From the Front Lines

Twenty-five years in the browser wars taught me a few things:

Monopolies breed stagnation. When IE controlled roughly 95% of the market at its peak, Microsoft stopped innovating. It took Firefox, then Chrome, then the entire mobile revolution to shake them out of their complacency. Competition makes better products.

Standards matter. The web got infinitely better when browsers started following the same rules. The W3C, WHATWG, and the open-source community deserve more credit than they get.

Mobile changes everything. The iPhone didn't just add a new screen size to support. It fundamentally changed how people experience the web. The companies that adapted survived. The ones that didn't are memories.

Users don't care about browsers. They never did. They just want the internet to work. Every hour we spent debugging IE6 hacks was an hour we couldn't spend making something actually good.

And finally: The wars end, but the work continues.

The browser wars shaped an entire generation of web developers. We learned to test obsessively, to question everything, and never to assume code would work the same way twice. Those instincts don't disappear just because the fighting stopped.

The next battle is already brewing. AI browsers are emerging. Privacy concerns are reshaping how we track and target users. WebAssembly is changing what's possible in a browser. The metaverse (whatever that actually means) lurks on the horizon.

We're not done yet.

But for now, I'm going to enjoy the peace. Open a beer, load a website, and watch it render the same in every browser.

After everything we went through, that still feels like a miracle.

Shane Larrabee has been building websites since 1994 and somehow still thinks it's fun. He is the founder of FatLab Web Support, where he helps organizations maintain their WordPress sites without losing their minds. He has never once missed IE6.