I've been running FatLab Web Support for about fifteen years. In that time, I have tried and failed at SEO more times than I can count. My annual resolution to publish a blog post every week has died in February every single year. I have hired a content firm, sworn off AI slop, and then produced AI slop anyway. I have spent thousands of dollars on tools I didn't know how to use.

Here's the honest context for why SEO has been such a consistent failure of mine: I'm a web developer. I write code for a living. I'm not a writer, and I'm not a trained SEO professional.

The skills it takes to be a good content marketer (consistent writing output, keyword research intuition, an understanding of search intent, and editorial discipline) are not skills that come naturally to me. They never have. For a long time, I assumed that meant this whole category of work just wasn't for me, and I accepted that trade-off because networking was keeping the business healthy enough.

Then, starting about six months ago, something changed. Our traffic has roughly quadrupled since. I've picked up three new clients in the last month alone. This is the best content marketing result I have ever seen, by a wide margin, from a little agency that had basically given up on the whole thing.

This post is the story of how that happened, and the system I built to produce content that actually ranks, gets cited by AI tools, and doesn't read like every other piece of AI-generated filler on the internet.

A friend of mine who runs another agency asked me to write this up so he could try it too. If you're in the same boat, this one's for you.

The Fifteen-Year Record of Failure

Here's what our traffic looked like for fifteen years.

At our absolute peak in the early days of our SEO efforts, we pulled in around 500 visitors per month. As Google got smarter, the trend went the other way. For most of our history, we averaged 300 to 500 monthly visitors, and the leads from that traffic came in at a rate of maybe four qualified prospects per year. Four. Per year.

The business didn't die, because networking carried us. Referrals, partnerships, relationships, and a reputation for doing good work kept the lights on. SEO was this thing I knew I should care about, tried to care about, and kept bouncing off of.

Every year, I'd tell myself this was the year. I'd write out a list of ten blog post topics. I'd bang out eight of them in a sprint, then a client project would blow up, and the last two would sit in a drafts folder forever. I once committed publicly to writing a post a week for a year. I made it to about the end of February.

At one point, I gave up on doing it myself and hired a content firm. They wrote me 500- to 1,000-word generic how-tos, one a week. They were fine. They were also indistinguishable from the other ten thousand generic how-tos on the same topic.

Nothing ranked. No leads came in. I let them go after a few months, probably sooner than I should have, but I couldn't justify the spend against the zero results.

I bought every SEO tool you can think of. SEMrush. Screaming Frog. Ahrefs. More than once. Each time, I convinced myself the tool would be the thing that cracked it. Each time, I ended up staring at dashboards full of data I didn't really know what to do with.

Estimated search volume doesn't mean traffic. Keyword difficulty is abstract until you understand your own domain authority. I was paying for intelligence I couldn't act on.

This was the pattern for fifteen years. Big push, fizzle, guilt, give up, try again next year. If you're running a small agency or a consulting practice and this sounds painfully familiar, I'm with you. It was a running joke in my head, and when I mentioned it to other business owners, most of them laughed uncomfortably because they're living the same cycle.

The AI Slop Detour

About a year and a half ago, I thought I'd finally cracked it. The tools had gotten good enough that I could automate the whole thing.

I built a system with Make.com that fed topics from a Google Sheet into Perplexity, which wrote full articles. Make.com then generated a featured image and published the post directly to our site. I was so pleased with myself. It felt like cheating for about a week.

Over the following months, that system produced somewhere between 150 and 200 blog posts. It was a cool technical achievement. And looking back, a year and a half ago, Claude Code wasn't really out yet, Perplexity was genuinely impressive, and this kind of automated pipeline was cutting-edge.

The posts didn't rank. Not one meaningful position.

They got impressions. I now know those impressions were coming from ranking on page four or five, where nobody clicks. The articles were factually okay, reasonably well written, and completely, thoroughly generic.

There was no personal experience in them. No opinion. No specific client story. Nothing that made them mine, and more importantly, nothing that signaled Experience, Expertise, Authoritativeness, or Trustworthiness (E-E-A-T) to Google. I'm not an SEO expert, but Google clearly knew exactly what was going on.

Six months ago, I deleted all of them. Every one.

That was painful. It was also freeing. I had proven to myself that the problem was not effort, not volume, not even technology. The problem was that none of those posts had any of me in them.

The Lightbulb Moment

The shift happened when I started getting comfortable with Claude Code.

I was using it for development work, and I had this realization: this tool can actually know things about my company and me. I can feed it my services, my case studies, my perspective, my voice. And then it can ask me questions, real questions, about what I actually think on a topic, what I've actually seen in client work, what I'd actually say to a prospect.

And once it has those answers, it can write.

That was the unlock. We already knew AI could generate blog posts. We knew those posts were slop. The missing piece was a structured way to scale the author's experience into the writing process, without the author having to sit down and draft every post from scratch.

I started building a workflow around that idea. Research first, then a structured interview with me, then drafting. The first time I produced a full topical cluster, roughly ten connected blog posts covering one area of our business, in a matter of hours, I knew I was onto something.

What's Actually Happening Now

Six months in, here's what the numbers look like. These are screenshots straight from my Ahrefs account.

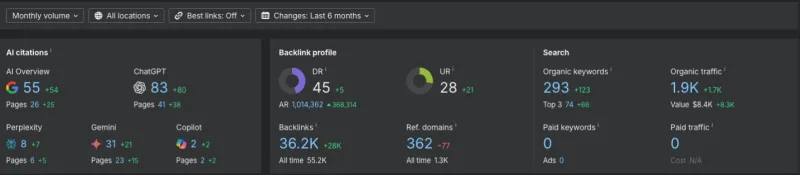

First, a six-month snapshot of AI citations, backlinks, and search visibility:

The leftmost panel is the one that still surprises me. We went from effectively zero AI citations to a substantial, consistent number. Our content is being referenced by AI search tools (Perplexity, ChatGPT's web search, and Google's AI Overviews) in a way that nothing we've ever published has been.

I think this is the E-E-A-T signal paying off. AI search seems to reward the same first-hand experience markers that Google does.

The middle panel shows that our domain rating has increased by roughly 5 points over the past 6 months. The right panel shows search visibility going from near zero to a healthy, sustained number.

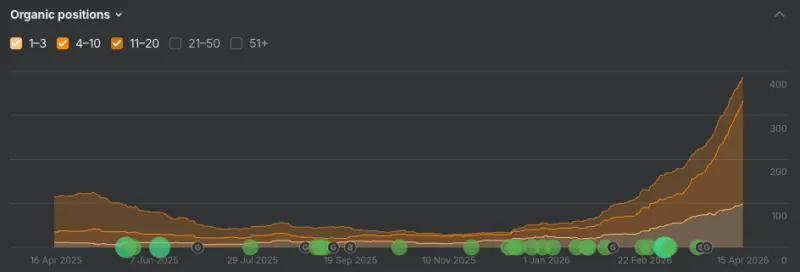

Now zooming out to a full year of organic keyword positions:

The spike at the end is what topical authority looks like when it actually kicks in. Not a smooth climb, but a step change, as the clusters start to reinforce each other through internal linking and topical depth.

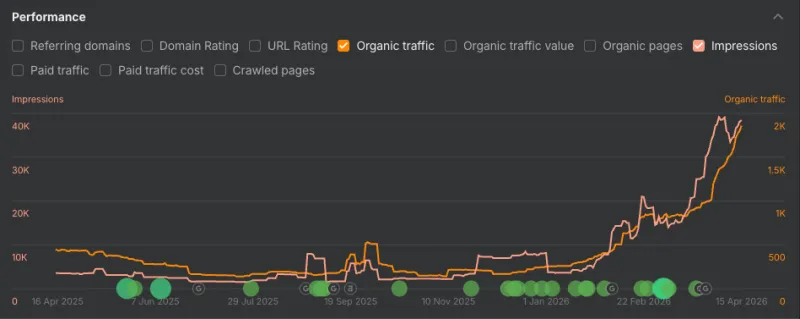

And the same one-year view for traffic and impressions:

The last three months are where it stopped being theoretical.

Translated into business terms:

- We've gone from a range of 300 to 500 visitors per month to around 2,000

- I've picked up three new clients in the last month, and six qualified leads in the last two months

- Google Search Console, which I ignored for fifteen years because it was always bad news, is now the first dashboard I check in the morning

Over the six months, I've published more than 300 blog posts using this system. The comparison to the Perplexity experiment is stark. More posts, and unlike the last batch, these actually rank and convert.

The Stance on AI Slop

I want to be clear about where I come from on this, because I've seen both sides.

The reason I'm allergic to AI slop is that I produced a pile of it myself. I know exactly how tempting it is to automate the whole thing. I know how cool it feels to watch a pipeline publish articles while you sleep. And I know how empty the results are.

The slop problem isn't that AI wrote it. The slop problem is that nothing human went into it. No opinion, no experience, no specific story, no judgment call anyone would stand behind. Google can tell. AI search tools can tell. And readers, when they do land on the page, can tell within a paragraph.

Using AI to write is fine. Using AI to write without putting yourself into the process is what makes slop. The system below is built entirely around solving that one problem.

The System

Here is the workflow, phase by phase. A friend of mine asked me to write this so he could follow along, so I'm going to be specific enough that you can actually do this, while keeping it light on the ceremony.

You'll need Claude Code, Ahrefs or a similar keyword tool, and a dictation tool like SuperWhisper or MacWhisper (you can type, but dictation makes this dramatically better). I run this in the terminal version of Claude Code, but Claude Code on the web or the desktop client works just as well, and you could probably adapt this to ChatGPT or another capable AI agent.

Phase 0: Cluster Discovery

Before you write anything, you need to know what to write about. A cluster is a group of related blog posts: one broad hub article and several narrower spokes, all linking to one another.

I open a fresh Claude session and feed it my sitemap, a description of my business, and any existing content inventory. Then I ask it to research online and propose content clusters that would make sense for my business. I review, push back, and lock in the ones I want to tackle.

As a concrete example, clusters we've published over the last six months include WordPress security plugin reviews, SEO plugin reviews, caching plugins, page builders, site migration services, hosting for different types of organizations, and a nonprofit/association vertical. Each one has a hub article and anywhere from three to thirteen spokes.

Phase 1: Strategy Document

For each article in the cluster (the hub and each spoke), produce a short strategy document before writing anything.

Claude proposes candidate key phrases for each article, and across a full cluster, these often add up to 30 to 50 phrases in total. You run them through Ahrefs and feed the volume, difficulty, and traffic potential back.

Critically, filter those phrases against your own domain rank. A keyword with a difficulty of 60 is irrelevant if your domain doesn't have the authority to compete for it. Aim for phrases where difficulty sits comfortably below your domain authority with room to spare. As your domain rank grows, the ceiling rises.
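If you'd rather script that filter than eyeball it in a spreadsheet, it could look something like the minimal Python sketch below. To be clear about what is assumed: the `Keyword` class, the `filter_keywords` function, the 10-point `headroom` default, and every number in the sample data are made up for illustration. None of them come from Ahrefs or from my actual keyword lists.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    volume: int      # estimated monthly searches reported by your keyword tool
    difficulty: int  # keyword difficulty score, 0 to 100


def filter_keywords(keywords, domain_rating, headroom=10):
    """Keep phrases whose difficulty sits at least `headroom` points below the domain rating.

    `headroom` is an illustrative assumption, not a rule from any keyword tool.
    """
    ceiling = domain_rating - headroom
    kept = [k for k in keywords if k.difficulty <= ceiling]
    # Easiest phrases first; among equal difficulty, higher volume first.
    return sorted(kept, key=lambda k: (k.difficulty, -k.volume))


# Made-up sample data, for illustration only.
candidates = [
    Keyword("best wordpress security plugin", 2400, 60),
    Keyword("wordpress security plugin for nonprofits", 90, 8),
    Keyword("security plugin comparison for small sites", 150, 15),
    Keyword("how to harden wp-config", 40, 20),
]

shortlist = filter_keywords(candidates, domain_rating=30, headroom=10)
for k in shortlist:
    print(f"{k.phrase}: difficulty {k.difficulty}, volume {k.volume}")
```

Note that the sketch filters on difficulty only and never on volume. As your domain rating grows, you raise `domain_rating` and the ceiling rises with it.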

One important note: sometimes a spoke topic you want to cover has little or no search volume. Write it anyway. These pieces earn their keep as authority-backing content. They deepen the cluster, demonstrate expertise, and give you something concrete to link to in sales conversations. And as your domain rank climbs, they increasingly get picked up for long-tail queries you never targeted.

The strategy document is the contract for the article. Lock it before writing so you don't have to re-argue the direction mid-draft.

Phase 2: Research

With strategy documents for every article in the cluster in hand, start a fresh Claude Code session and hand over the package. Explain that you're writing a topical cluster: here's the hub, here are the spokes, here are the strategy docs.

Ask for comprehensive research, and be specific about what that means. Feature tables when comparing tools. Service and pricing tables when the topic touches competitors. Cited references and source links for claims and statistics. Timelines or version histories when relevant. If the research comes back reading like a ChatGPT summary, it isn't comprehensive enough. Push back.

Then ask for competitive research, with a specific framing. Don't just find gaps in the top-ranking pieces. Find gaps that align with your business, experience, and services. A gap you have no real authority to fill is not an opportunity, it's a trap. Make that filter explicit in the prompt.

Phase 3: The Interview

This is the most important phase in the entire system. If you skip or rush it, everything that follows will sound like every other piece of content on the internet. It's also where your E-E-A-T signal gets built: first-hand experience, specific judgment calls, and real client scenarios. These are the things AI filler cannot fake, and they only come out of your head.

With the research in context, ask Claude to generate 10 to 20 interview questions drawn from the research and strategy docs, covering the hub's broad topic plus the specific angles of each spoke. You want material for every article in one session.

Dictate your answers, don't type them. This is the single biggest upgrade I've made to the process. Use Claude's voice input, SuperWhisper, or MacWhisper.

Treat it like a real interview. Someone is asking you questions out loud, and you're answering out loud. Ramble. Contradict yourself. Drop in half-finished thoughts. That is exactly the raw material you want, and it's the stuff you'd never produce at a keyboard.

When you're done, ask Claude Code to polish the transcript, organize it by topic, and pull out specific quotable lines and anecdotes that could anchor sections of the final posts.

Plan on 30 to 60 minutes per cluster. It feels slow. It is the most valuable time you will spend on the content, by a wide margin.

Phase 4: Write the Hub First

Always the hub before the spokes. The hub is the anchor. It defines the cluster's scope and positioning. A weak hub infects every spoke that links to it.

Phase 5: Write the Spokes

Each spoke draws from the same research and interview transcript. Sometimes I have Claude write the entire cluster back-to-back in a single session, which collapses the writing phase into an afternoon. That works best when the interview material is rich and the strategy is locked. For anything where I'm likely to wrestle with the angle, I still write spokes one at a time.

Phase 6: Refinement

Once the cluster is drafted, run a single refinement pass across all articles with the whole cluster in context. Ask Claude to review for:

- Writing quality and structure. Paragraph length, heading hierarchy, flow

- Formatting. Places where a bullet list, bold phrase, or pull-quote would help scannability

- FAQ sections where they genuinely add value, based on the research

- AI signals. Scrub em-dashes, en-dashes, and the telltale words that mark AI writing ("delve," "seamless," "robust," "leverage," and so on)

- SEO review against the original strategy docs. Title tag, meta description, primary keyword in headings and early body copy, secondary keywords worked in naturally
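The AI-signals item in that list is the easiest one to check mechanically. Here is a minimal Python sketch of a scanner that flags dashes and telltale words by line number. The function name `find_ai_signals` and the stem list are my own illustration, built from the four example words above; it flags only, and deciding how to rewrite each hit stays a human (or Claude) job.

```python
import re

# Stems of the example words above, so "delving" and "leveraging" are caught too.
# Extend this list with your own repeat offenders.
TELLTALE_STEMS = ["delv", "seamless", "robust", "leverag"]

# Unicode escapes for the em-dash and en-dash characters.
DASHES = {"\u2014": "em-dash", "\u2013": "en-dash"}

WORD_PATTERN = re.compile(
    r"\b(?:" + "|".join(TELLTALE_STEMS) + r")\w*", re.IGNORECASE
)


def find_ai_signals(text):
    """Return (line_number, label, matched_text) tuples for every flagged item."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for char, label in DASHES.items():
            if char in line:
                hits.append((number, label, char))
        for match in WORD_PATTERN.finditer(line):
            hits.append((number, "telltale word", match.group(0).lower()))
    return hits


# Made-up draft text, for illustration only.
draft = (
    "Our robust platform delivers results.\n"
    "Let's delve into caching \u2014 it matters.\n"
    "We migrated forty sites last year."
)

for hit in find_ai_signals(draft):
    print(hit)
```

A scan like this is a backstop for the refinement pass, not a replacement for it. It catches surface tells and says nothing about whether the post has any real experience in it.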

Beyond this point, more human editing is always good (hello Grammarly). In practice, most articles are ready to publish after the refinement pass, unless it's a particularly personal piece where I want to sit with it longer.

Phase 7: Image Generation

Three reasonable options: your own photography or art, stock photography, or AI-generated images. In my experience, Google's Gemini gives the best results for our blog, and I use it for the featured image plus several inline images, but any approach can work. Keep the images literal. Show the actual thing the section is about rather than abstract metaphors.

Phase 8: Publication

Publishing mechanics depend on your CMS, so that part is yours. Two things matter regardless of platform:

Internal linking is how the cluster becomes a cluster. Every spoke links back to the hub. The hub links out to every spoke. Spokes link to each other naturally. Without this, you've just published a pile of related articles, not a topical cluster.
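The two hard rules there (every spoke links to the hub, the hub links to every spoke) are simple enough to verify with a script before you hit publish. The Python sketch below is one way to do it, under assumptions: `missing_cluster_links` is a hypothetical helper, the URL paths are placeholders, and the `links` mapping is something you would have to build yourself from your CMS or a crawl.

```python
def missing_cluster_links(hub, spokes, links):
    """Return (source, target) pairs the cluster still needs.

    `links` maps each article's URL path to the set of paths it links to.
    Only the two hard rules are checked: every spoke links to the hub,
    and the hub links to every spoke. Spoke-to-spoke links are left to judgment.
    """
    missing = []
    for spoke in spokes:
        if hub not in links.get(spoke, set()):
            missing.append((spoke, hub))
        if spoke not in links.get(hub, set()):
            missing.append((hub, spoke))
    return missing


# Hypothetical cluster; these paths are placeholders, not real URLs.
hub = "/caching-plugins/"
spokes = [
    "/caching-plugins/plugin-a-review/",
    "/caching-plugins/plugin-b-review/",
]
links = {
    "/caching-plugins/": {"/caching-plugins/plugin-a-review/"},
    "/caching-plugins/plugin-a-review/": {"/caching-plugins/"},
    "/caching-plugins/plugin-b-review/": {"/caching-plugins/plugin-a-review/"},
}

for source, target in missing_cluster_links(hub, spokes, links):
    print(f"{source} should link to {target}")
```

An empty result means the hub-and-spoke skeleton is in place. Whether the spoke-to-spoke links read naturally is still something to check by eye.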

Scheduling is a content strategy call. I publish the entire cluster at once so I can set up all the internal links in one pass, and the cluster signals topical depth immediately. Scheduled rollouts are also reasonable if you want a steady publishing cadence. Pick what fits your strategy.

What Actually Made the Difference

Looking back at fifteen years of failed SEO and six months of something actually working, a few things stand out:

The interview is non-negotiable. Every generic AI-written post I've produced in my life has failed. Every post with my experience in it has performed. It's not subtle.

Cluster discipline beats volume. Three finished clusters outperform thirty scattered posts. Topical authority is cumulative, and internal linking only works if the pieces actually connect.

Strategy documents prevent drift. They sound like bureaucratic overhead. They prevent the mid-draft direction changes that used to burn half my writing time.

AI makes the data legible. The tools I bought over the years were always capable. I was the bottleneck. Claude Code reading Ahrefs and Google Search Console data alongside my business context is what finally made keyword research feel actionable instead of abstract. I'm still not an SEO expert. I don't need to be.

Don't publish slop, even if it's easy. I know. I did it. Google knew.

Where This Leaves Me

I'm the last person who should be writing a content marketing success story. I've run my business on networking for fifteen years. I've avoided SEO. I've given up on blog schedules more times than I can count. I have, quite literally, deleted close to two hundred of my own articles.

Six months into this system, our traffic is the highest it has ever been in the company's history. Leads are coming in from content for the first time at a meaningful rate. And I actually check Google Search Console in the morning, because it's finally giving me good news.

If you're a fellow agency owner or consultant sitting on years of real experience but struggling to translate it into content that ranks, this is the system I'd hand you. It isn't magic. It's a structured way to get your actual expertise onto the page, at a pace you can sustain, without producing slop.

Try it for one cluster. Give it the interview phase it deserves. See what happens.

If you want to talk through how this might fit your business, or you'd like help setting up the system, reach out. I'm happy to share what's working.

Frequently Asked Questions

How long does one cluster take, start to finish?

Honestly, about three hours. That's a cluster with a hub and anywhere from 6 to 13 spokes, from strategy docs through a completed first draft of every article. Strategy and keyword review take the first chunk; the interview runs for 30 to 60 minutes; and then Claude drafts the whole cluster in a single batch session, based on the research and interview transcript. Refinement adds a bit more on top.

I know three hours sounds aggressive for 10-plus blog posts, and it did to me the first time, too. The honest answer is that once the strategy docs, research, and interview are in place, the drafting is fast. The upfront work is what makes that speed possible, and it is also what makes the drafts worth publishing at all.

What tools do I actually need to run this?

Claude Code (terminal, desktop app, or web), a keyword research tool like Ahrefs or SEMrush, and a dictation tool like SuperWhisper or MacWhisper if you can swing it. That's the core stack. Everything else is optional. No content calendar software, no paid SEO platforms beyond the keyword tool.

Do I need to use Claude specifically?

No. I happen to use Claude Code because it works well with local files, can read multiple strategy documents at once, and handles long context well. ChatGPT or another capable AI agent could be adapted to run a similar workflow. The system is the valuable part; the specific AI model is a detail.

Does this work if I'm not the subject matter expert?

Honestly, no. The whole thing is built around extracting your lived experience. If you don't have real experience in the topic, you won't produce useful interview material, and the drafts will revert to generic. If you're in a field you don't know well, either learn it first or partner with someone who has the expertise and run the interview phase on them.

How many articles should I publish before expecting results?

In my case, the traffic curve started bending up around months three to four of consistent cluster publishing. I had published maybe 100 posts by then across several clusters. That said, I had a weak domain to start with. A stronger domain should see movement sooner. Either way, think in terms of clusters completed, not individual posts published.

What if my topic has no search volume?

Write it anyway if it matters to your business. Low-volume posts still reinforce topical authority, demonstrate expertise to readers and AI search tools, and give you something to link to in sales conversations. As your domain rank climbs, they tend to pick up long-tail traffic you never targeted.

Is this going to stop working when everyone does it?

Probably not the way you'd expect. The system itself is replicable. The interview material at its heart is not, because your experience is yours. As long as the output reflects genuinely first-hand expertise that other people can't fake, it should hold up against search engine and AI quality filters that are already trending in this direction.